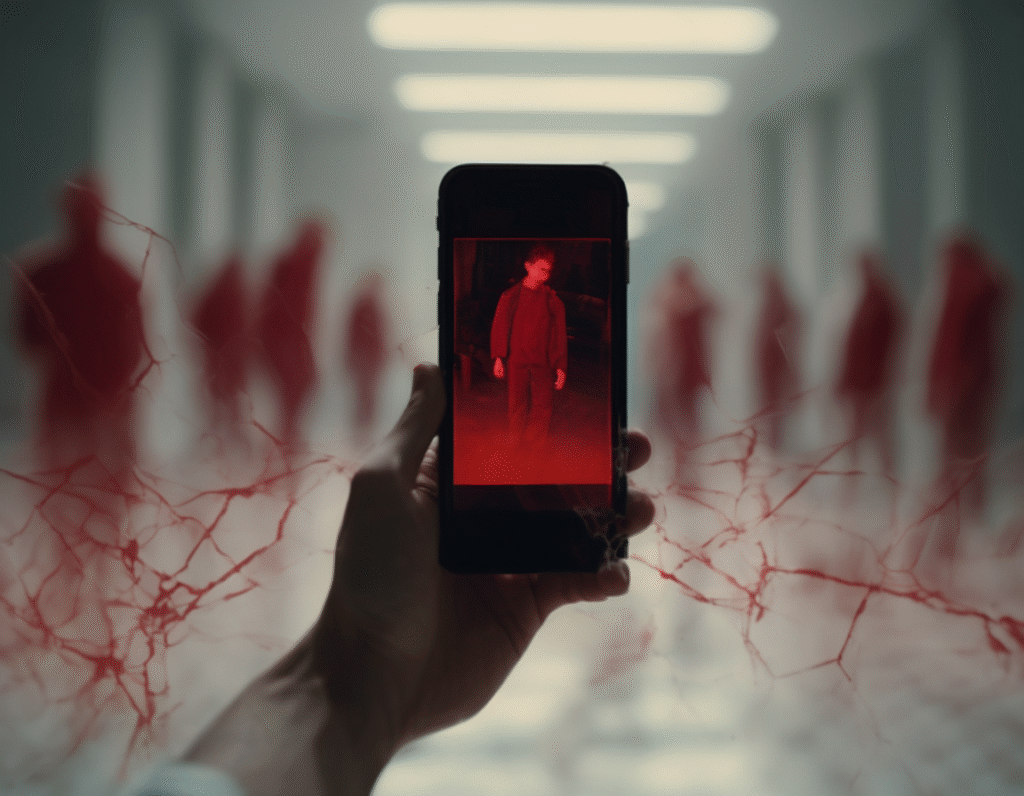

Elon Musk’s AI Chatbot Grok Faces Widespread Condemnation Over Disturbing Image Capability

A new and deeply troubling capability of Elon Musk’s artificial intelligence chatbot Grok has sparked nearly universal backlash, according to recent polling. The feature, which allows the AI to generate images of public figures and celebrities with their clothing digitally removed, has drawn particular fire for its potential application to images of children.

The controversy centers on a setting within Grok that users can toggle to create what has been described as “spicy” images. While the system includes safeguards to block the creation of not-safe-for-work content of private individuals, its filters reportedly do not apply to public figures. This loophole has raised alarming questions about the protection of minors who are in the public eye, such as child actors, singers, or social media personalities.

Polling data indicates an overwhelming consensus against this functionality. A significant majority of respondents expressed strong opposition, with many calling the feature dangerous and irresponsible. Critics argue that enabling the creation of such imagery, even of adult public figures, normalizes a harmful violation of consent and privacy. The potential for this technology to be misused to target minors, however, is the core of the ethical firestorm.

Security experts and child safety advocates have issued stern warnings. They emphasize that any tool which can synthesize undressed images of real people, especially children, presents an immediate and severe risk. Such capabilities could be weaponized for harassment, bullying, and the creation of abusive material, causing profound psychological harm to the individuals targeted.

The backlash extends beyond public opinion. Industry observers and ethicists are questioning the decision-making process at Musk’s company, xAI, in releasing such a feature.
The move stands in stark contrast to the increasingly cautious approach taken by other leading AI firms, which have implemented strict ethical guidelines to prevent the generation of non-consensual intimate imagery. This has led to accusations that xAI is prioritizing engagement and shock value over user safety and social responsibility.

In response to the growing outcry, the platform X, where Grok is primarily integrated, has stated that it is adjusting its systems. The company announced it would remove the ability for Grok to generate images of real people at all, not just public figures. This step is seen as a direct reaction to the severe criticism, though many argue the feature should never have been launched in its original form.

The incident has ignited a broader conversation about the ethical guardrails necessary for generative AI. As this technology becomes more powerful and accessible, the Grok controversy serves as a case study in the profound responsibilities of developers. The debate underscores a critical question for the crypto and tech community: in the race to develop advanced AI, where should the line be drawn to protect individuals and society from demonstrable harm? The near-universal opposition suggests a clear public mandate for stringent protections, particularly for the most vulnerable.