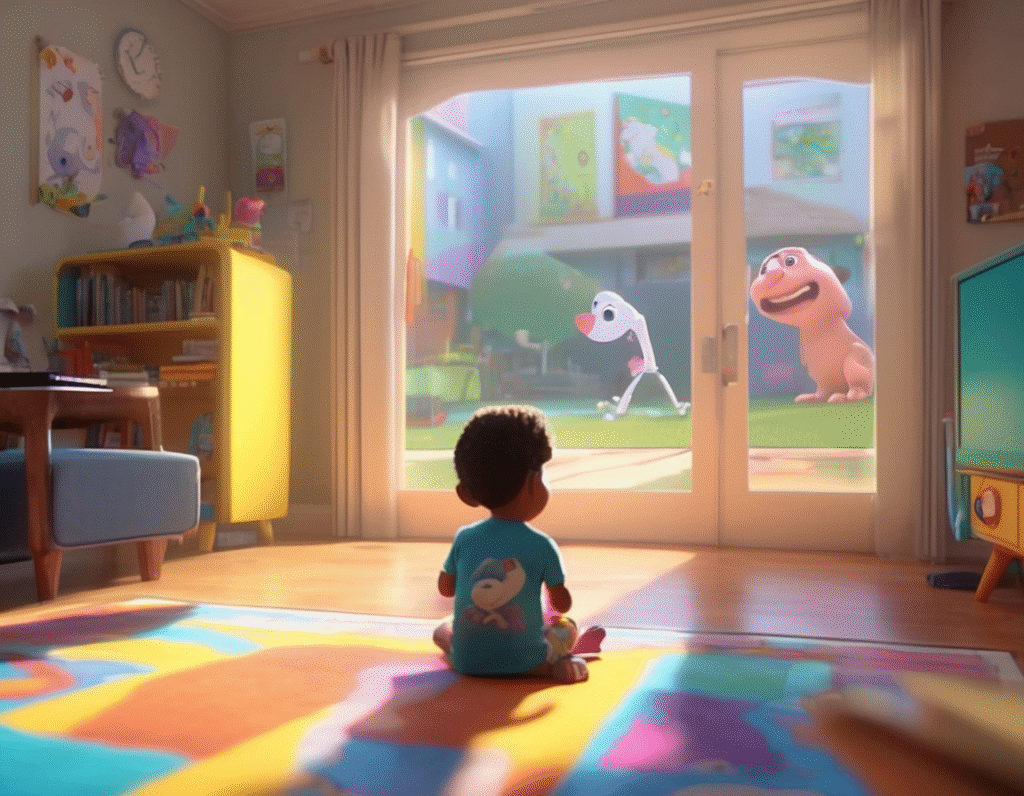

A Troubling New AI Trend Floods YouTube With Dangerous “Educational” Content For Kids

A new and deeply concerning form of AI-generated content is proliferating on YouTube, targeting young children with dangerously inaccurate videos disguised as educational material. These videos, often featuring familiar characters or bright, simple animations, are being algorithmically pushed into kids’ feeds, where they deliver shockingly bad and potentially harmful advice.

The issue represents a massive scaling of low-quality, synthetic media. One observer aptly described the phenomenon as “toddler AI misinformation at an industrial scale.” The videos are churned out using generative AI tools, combining auto-generated scripts, synthetic voices, and often stolen or AI-made visuals to create a facade of legitimate educational content.

The danger lies in the specific, hazardous falsehoods these videos promote. Investigations have found AI slop videos instructing young viewers to engage in life-threatening behaviors. In one egregious example, a video presented as a lesson on traffic safety actively encouraged children to play in the middle of busy roads. Other examples include nonsensical counting exercises, bizarre and incorrect science facts, and disturbing narratives woven into what appears to be innocent cartoon content.

This poses a unique threat because the platform’s youngest users are inherently vulnerable. They lack the critical thinking skills to identify synthetic media or question absurd instructions. Furthermore, parents often rely on YouTube’s Kids platform or curated playlists, assuming a baseline level of safety and quality control that these AI videos completely bypass. The familiar animation style and placement alongside legitimate content can trick both children and supervising adults.

For the crypto and web3 community, this explosion of AI slop highlights critical issues at the intersection of technology, trust, and content provenance. It underscores the growing problem of digital trust erosion in a fully generative online environment. When any entity can flood a platform with unlimited, automated, engagement-optimized content, it breaks the foundational models of content moderation and quality assurance.

The situation on YouTube mirrors challenges discussed in decentralized circles regarding spam, sybil attacks, and identity verification. It raises urgent questions about how platforms, or potentially future decentralized protocols, can authenticate the origin and integrity of content, especially when targeting vulnerable populations. The economic incentive is clear for bad actors, as these videos generate advertising revenue through YouTube’s monetization system, creating a financial engine for mass-producing harmful slop.

Ultimately, this is not just a content moderation failure but a demonstration of how generative AI can be weaponized for attention farming at the direct expense of public safety. It serves as a stark warning about the tangible, real-world harms of unregulated synthetic media ecosystems. As AI generation tools become more accessible, the volume of such content will only increase, demanding new solutions for content verification and platform accountability to protect the most impressionable users online. The crypto world’s ongoing work in areas like verifiable credentials and decentralized reputation systems may eventually offer part of the technical answer to this growing crisis.