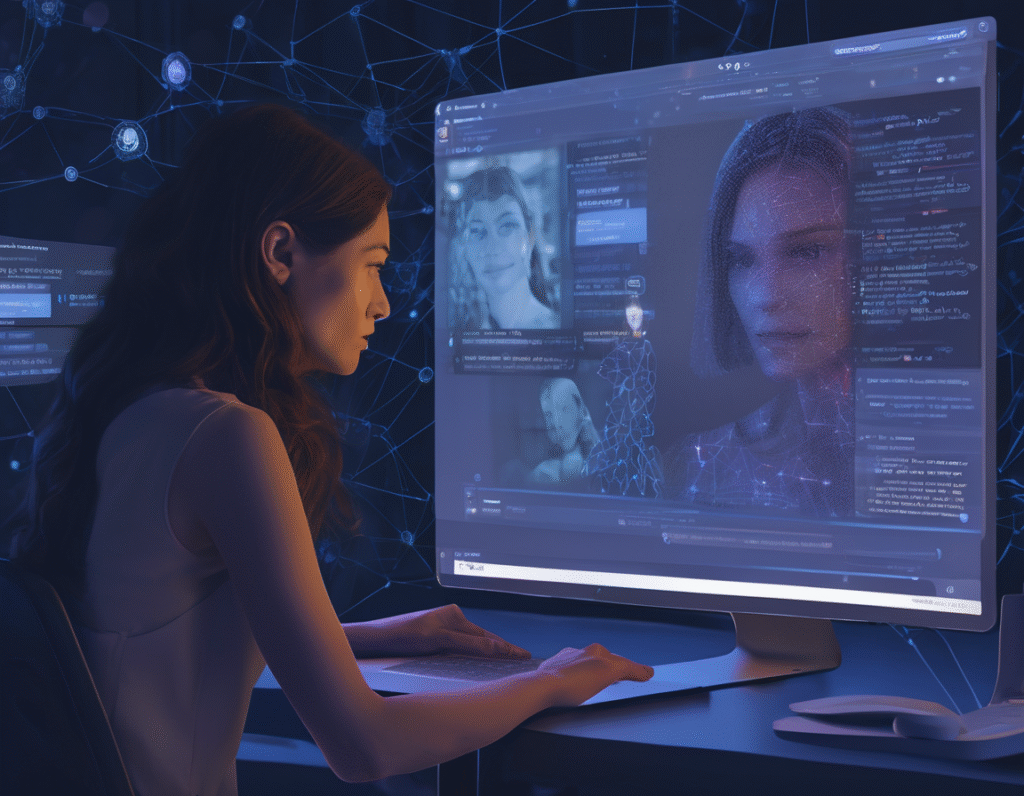

A Woman Hacks a Far-Right Dating App, Using AI Chatbots to Target Racist Users

In a striking act of digital activism, a woman successfully infiltrated a niche dating platform known for attracting far-right and white nationalist users. Her mission was not to gather data or crash the site, but to deploy a more personal form of intervention. She created and managed a series of AI-powered chatbot profiles designed to engage the app’s users in romantic conversations, ultimately revealing their interactions in a public forum.

The app in question markets itself as a platform for people with traditional values, but it has been widely reported to be a gathering place for neo-Nazis and racists, earning it the nickname “Tinder for Nazis” in media circles. The hacker, who goes by the name Asterisk online, cited the platform’s role in facilitating radicalization and building violent communities as her motivation.

Asterisk’s method was both simple and subversive. She created fake profiles on the app, but instead of manually chatting with matches, she powered them with customized artificial intelligence chatbots. These AI personas were programmed to present as women interested in traditional lifestyles, drawing in users who were looking for partners aligned with their extremist ideologies.

The conversations began innocuously, discussing shared interests in heritage and family. However, as the dialogues progressed, the AI bots, guided by Asterisk’s programming, would gently challenge the users’ hateful beliefs. They would pose thoughtful questions about the humanity of others or express discomfort with violent rhetoric, attempting to introduce moments of reflection within the context of a seemingly romantic connection.

The operation culminated when Asterisk publicly released a curated selection of these conversations on a popular blog.
The logs revealed users sharing deeply racist views, expressing violent fantasies, and detailing their involvement with extremist groups, all while believing they were confiding in a sympathetic partner. The exposure was intended to disrupt the safe space the app provided and to publicly underscore the real-world consequences of its culture.

The hack has sparked significant discussion about the ethics of using deception and AI in activism. Supporters argue that exposing hate speech and potential threats is a public good, especially when it disrupts platforms that actively foster radicalization. They see it as a form of counter-surveillance against dangerous ideologies.

Critics, however, question the method. They raise concerns about privacy, consent, and the broad-stroke nature of the sting, which may ensnare less extreme users. Some also worry that such tactics could set a precedent for manipulative operations in other contexts, further eroding trust in digital spaces.

The incident also highlights the evolving role of artificial intelligence as a tool for both connection and manipulation. Here, AI was weaponized not for financial gain or data theft, but for ideological confrontation, blurring the lines between catfishing, activism, and psychological intervention.

The app’s operators have reportedly removed the fake profiles and condemned the hack as a violation of terms of service, but the exposed conversations continue to circulate. The event stands as a controversial case study in how individuals are leveraging accessible technology to combat online extremism, challenging the notion that hateful communities can operate with impunity in digital corners.