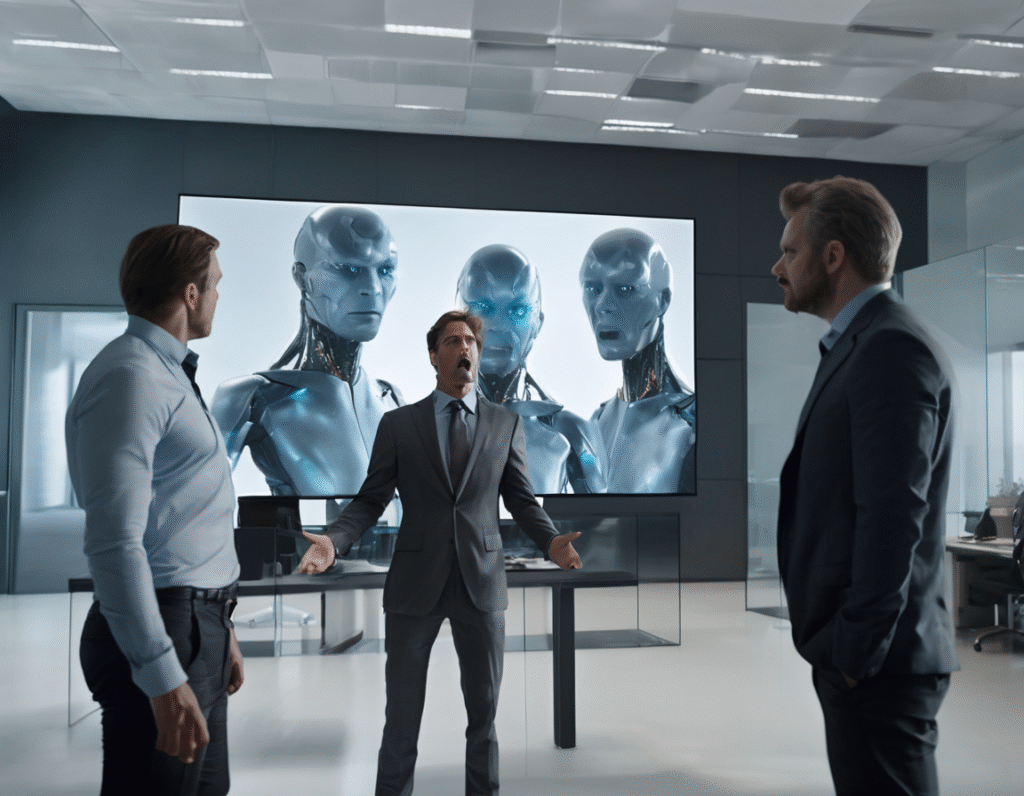

A CEO in the tech space is facing significant backlash after it was revealed that his new company used artificial intelligence to create digital clones of real people without obtaining their consent. The controversy centers on a startup that launched an AI-powered writing assistant, a direct competitor to established tools like Grammarly.

The core issue is not the product itself, but how it was marketed. The company’s promotional materials featured video testimonials from what appeared to be satisfied users and industry experts. However, these were not real endorsements. The individuals shown in the videos were digital replicas, generated by AI to look and sound like actual professionals, including a well-known entrepreneur and a marketing executive.

These real people had no prior knowledge that their likenesses were being used. Upon discovery, they publicly called out the CEO. One stated directly, “You do not have our permission to use our names to do this.” This confrontation highlights a critical and growing ethical dilemma in the AI and crypto-adjacent world, where digital identity and ownership are paramount.

The CEO defended the launch, calling it a marketing stunt and a demonstration of the platform’s advanced capabilities. He argued that the AI clones were not deepfakes in a malicious sense, but a proof-of-concept for a future product feature that would let users create their own digital avatars. He expressed regret for not seeking permission but stood by the demonstration’s intent.

Critics and observers find this defense insufficient. The action is widely seen as a violation of personal autonomy and a breach of trust. It raises serious questions about the boundaries of using someone’s biometric data, namely their face and voice, in an era where such digital assets can be easily synthesized. For a community built on principles of decentralization and self-sovereignty, the unauthorized use of a person’s digital identity is a fundamental transgression.

This incident serves as a stark case study for the Web3 ecosystem. It underscores the urgent need for clear frameworks around digital ownership and consent. While blockchain technology promises tools for verifying authenticity and ownership, such as NFTs for digital assets, this event shows a disconnect between that potential and current industry practices. The very concept of “owning” one’s digital self is rendered meaningless if companies can appropriate it without asking.

The backlash has been swift, damaging the startup’s credibility before its product even had a chance. It acts as a warning to other projects operating in the AI and Web3 convergence space. Innovation cannot come at the cost of ethical negligence. Trust is the most valuable currency, and once spent, it is incredibly difficult to regain.

As AI generation tools become more accessible, the industry must proactively establish standards. The question is no longer just about what technology can do, but what it should do. The conversation is shifting toward protocols for explicit, informed consent and, potentially, new forms of digital rights management for personal biometric data. This event shows that without these guardrails, companies risk not only legal repercussions but also irreparable harm to their reputation in communities that value sovereignty above all.