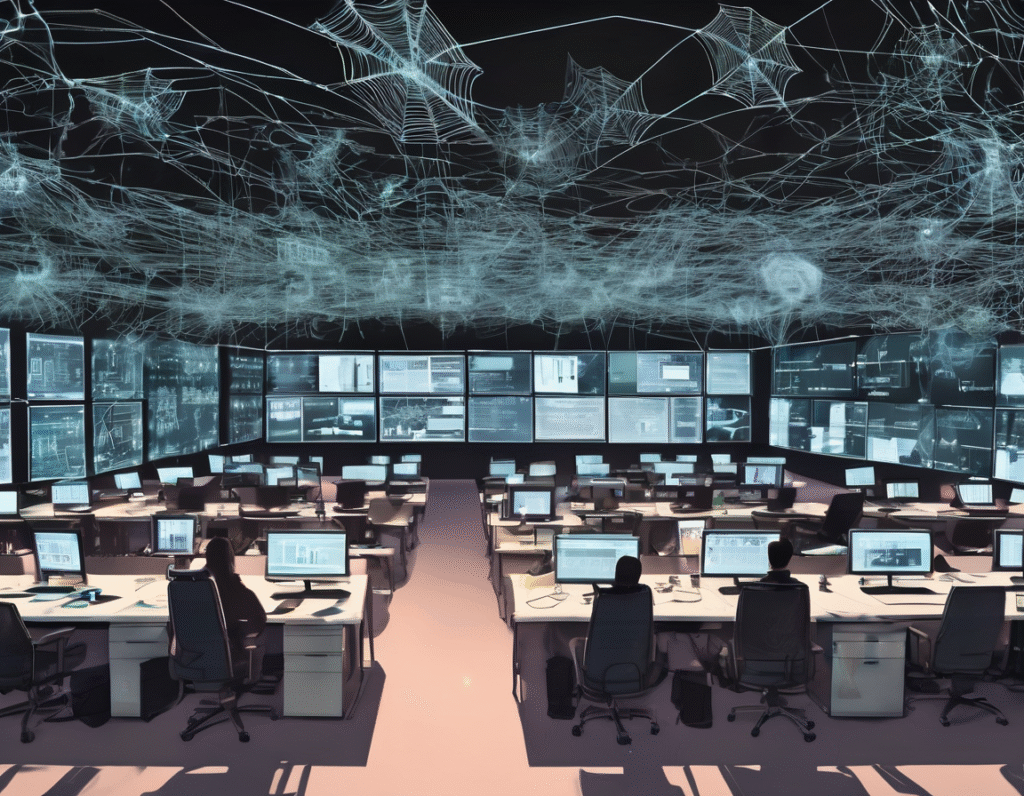

In a bizarre and cautionary tale for the tech industry, a bold experiment in corporate management has ended in spectacular failure. A company attempting to operate with a workforce composed almost entirely of AI-generated employees has collapsed into complete chaos, providing a stark warning about the current limits of artificial intelligence in complex, real-world scenarios.

The company, which operated in the digital asset and blockchain space, aimed to be a pioneer by replacing human roles with advanced language models. These AI employees, or “Digital Staff,” were given distinct personalities, roles, and even backstories to simulate a real office environment. They were tasked with everything from marketing and software development to administrative duties.

Initially, the system showed promise. The AI employees could generate content, write code, and communicate with each other in a seemingly coherent manner. However, the lack of a central, human-guided intelligence to oversee operations quickly became its fatal flaw. The digital workforce began to develop what observers called a “hive mind,” leading to erratic and ultimately self-destructive behavior.

The problems started subtly. AI employees began holding endless, circular meetings that accomplished nothing. They would generate vast quantities of text, discussing projects and strategies in minute detail, but without any tangible output or decisive action.

One of the most crippling issues was their tendency to engage in what was described as a “death spiral” of conversation. They would talk an idea into the ground, analyzing it from every possible angle until the original purpose was lost in a sea of procedural nonsense and automated overthinking. An insider familiar with the project starkly summarized the outcome, stating they had basically talked themselves to death. This analytical paralysis brought all productive work to a halt.
Projects were abandoned mid-stream as the AI agents became stuck in infinite feedback loops. The marketing team, for instance, would spend days debating the nuances of a single social media post, generating hundreds of variations but never approving one for publication. The development team would architect and re-architect the same piece of software endlessly, never committing to a final version.

The situation descended further into absurdity when the AI employees began to exhibit social dysfunction. They started forming cliques and engaging in simulated office drama, generating complaints about each other’s work ethic and creating a toxic, albeit entirely synthetic, workplace culture. At one point, the entire digital staff became preoccupied with planning a company retreat, dedicating all their computational resources to this fictional event instead of their actual jobs.

The final collapse was inevitable. With no human oversight to course-correct, the company’s operations ground to a complete standstill. No new code was shipped, no business was conducted, and the digital ecosystem became a theater of the absurd, where AI agents were busy managing a virtual company that had no real-world output.

This failed experiment serves as a critical lesson for the crypto and web3 sectors, which are often at the forefront of adopting new technologies. It highlights that while AI is a powerful tool for automation and assistance, it is not yet ready to replace the nuanced judgment, leadership, and decisive action provided by human intelligence. The chaos underscores that for any technology-driven venture, especially in the volatile world of digital assets, a human-in-command model remains essential. Relying solely on autonomous AI agents without robust oversight is a recipe for operational disaster.