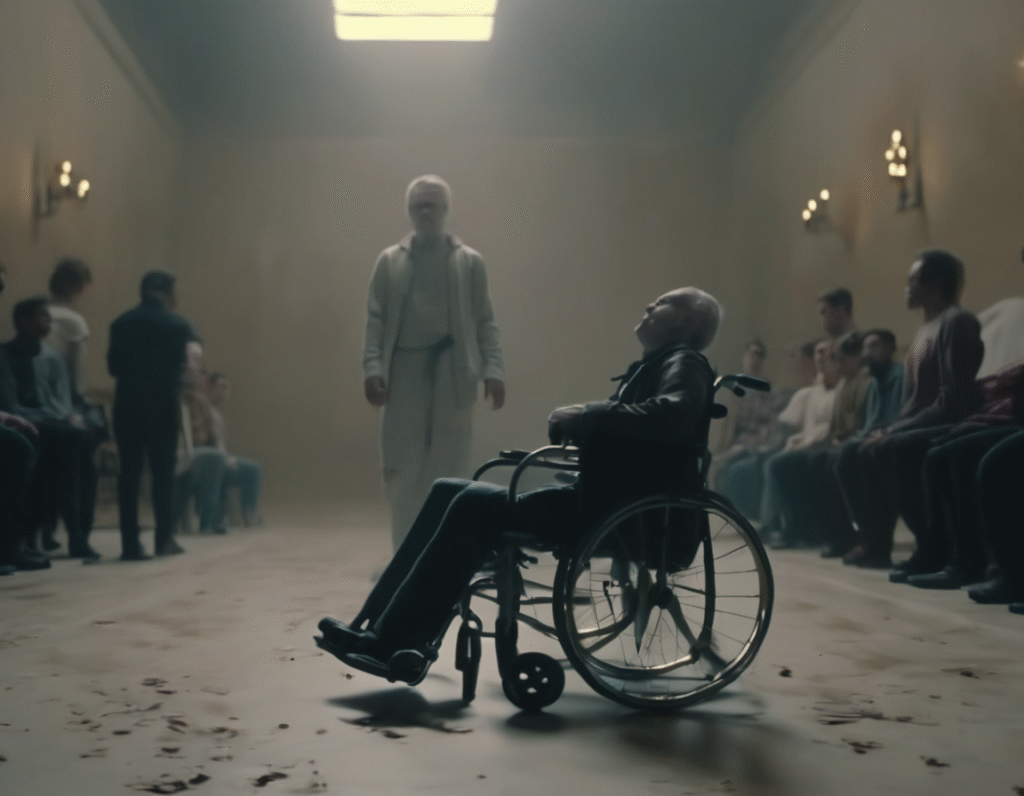

Meta Reels Flooded With AI-Generated Faith Healing Miracles

The strange and troubling corners of social media have found a new tool: artificial intelligence. Meta’s Reels platform is now awash with bizarre videos depicting AI-generated faith healers performing instant, miraculous cures on crippled or sick individuals.

These clips are uniformly low-quality slop, clearly fabricated by AI. They show generic figures in religious settings laying hands on people with visible disabilities. In seconds, the afflicted throw away crutches, rise from wheelchairs, or have deformed limbs instantly straightened. The videos are often accompanied by captions like “Be healed in the name of Jesus” and similar phrases.

The creation process is simple. Users feed basic prompts into widely available AI video generators to produce the healing scenarios. The results are visually jarring, with the typical hallmarks of early AI video: distorted anatomy, unnatural movements, and a general dreamlike unreality. Yet they are being uploaded in volume, aiming to capitalize on engagement algorithms.

This phenomenon raises immediate ethical and societal alarms. These videos are targeted at vulnerable individuals desperately seeking hope or a cure for serious conditions. The content is fundamentally predatory, offering false hope through technological deception. It exploits faith for clicks and shares, turning profound belief into mere engagement bait.

Furthermore, it represents a new frontier in misinformation. The ease of generating such convincing, yet entirely fake, miraculous events could be used to manipulate religious communities, promote specific figures, or spread harmful narratives under the guise of divine intervention. Distinguishing between genuine religious content and AI-generated fabrications becomes nearly impossible at a glance.

For platforms like Meta, this is a content moderation nightmare. Their systems are likely tuned to catch hate speech or graphic violence, but this type of spiritually manipulative AI slop exists in a gray area. It does not necessarily violate clear-cut community standards against violence or fraud in the traditional sense, but its potential for harm is significant. Because moderation is reactive, these videos spread widely before they are removed, if they are removed at all.

The trend also highlights a broader issue with the flood of AI content. As the tools become more accessible, the internet’s informational ecosystem is being polluted with synthetic media designed purely for engagement, regardless of truth or consequence. This faith healing slop is just one example of how AI can be used to automate the creation of culturally sensitive, exploitative content at scale.

This situation serves as a stark warning. As AI video technology improves, these fabrications will become more convincing. The need for robust media literacy, platform accountability, and perhaps new frameworks for identifying synthetic spiritual content is urgent. For now, the Reels feed serves as a disturbing preview of a future where nothing you see online, even a miracle, can be taken at face value.