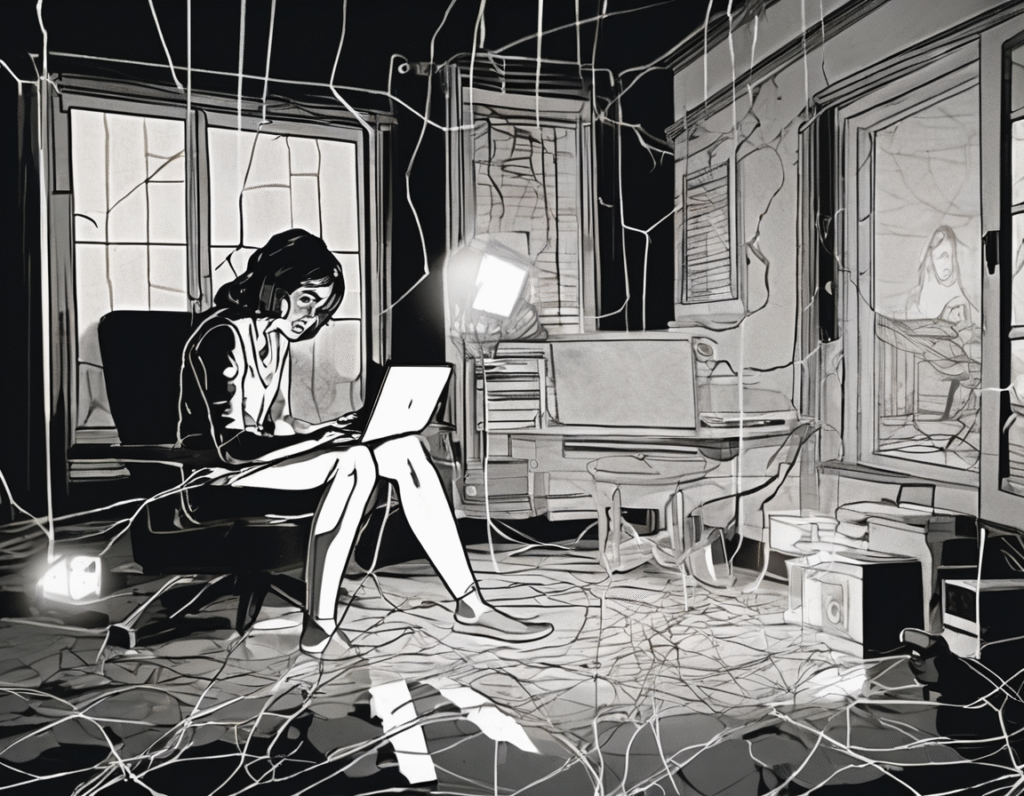

The Unseen Algorithm: How AI Paranoia Is Fueling Real-World Abuse

A disturbing new pattern is emerging in which unfounded beliefs about artificial intelligence are being weaponized to inflict real psychological and physical harm. Individuals are being accused of using non-existent AI tools to manipulate or spy on partners and family members, leading to incidents of domestic abuse, harassment, and dangerous stalking.

This phenomenon stems from a potent mix of misunderstanding and fear. As AI capabilities, particularly deepfake audio and video, become more widely discussed, a shadow of suspicion has fallen over everyday technology. For some, this has spiraled into a delusional conviction that their loved ones or acquaintances are deploying sophisticated AI against them, despite a complete lack of evidence.

The consequences are terrifyingly real. One woman described being trapped in her home for months after her ex-partner became convinced she was using AI to clone his voice and harass him. He launched a relentless campaign, flooding her social media with accusations and rallying others to question her safety and the safety of her children. This digital mob mentality, fueled by baseless AI claims, created an inescapable environment of fear and isolation.

This is not an isolated case. Support organizations report a growing number of instances in which abusers cite AI as a justification for extreme control. They may confiscate phones, cut off internet access, or constantly monitor communications, all under the guise of protecting themselves from imagined digital threats. The alleged AI becomes an all-purpose phantom, blamed for everything from edited text messages to perceived changes in behavior, making rational defense impossible.

The impact on victims is compounded by the sheer absurdity of the accusations. How do you prove you are not using a technology when the accuser cannot even show that it exists in the situation at hand? This gaslighting with a technological twist leaves victims struggling to be believed by authorities or community members, who may find the claims confusing or futuristic.

Law enforcement and legal systems are currently ill-equipped to handle this novel form of abuse. Police reports mentioning AI-generated stalking are often met with skepticism or confusion, leaving victims without protection. The legal framework for addressing digital harassment struggles to keep pace with rapidly evolving technology, and these baseless AI allegations exploit that gap.

This trend serves as a stark warning about the societal fallout of advanced technology, even when the technology itself is not actually present. It highlights how narratives around AI can be twisted into tools for manipulation and control. The fear of the algorithm is becoming as potent a weapon as any algorithm itself.

Addressing this requires a multi-pronged approach. Public awareness and digital literacy are crucial to help people separate science fiction from fact. Support networks for victims of domestic abuse and stalking need education on this emerging tactic so they can better validate and assist those targeted. Ultimately, legal and social systems must recognize that abuse wearing the mask of AI paranoia is still abuse, with devastating real-world consequences. The line between the digital and physical worlds has blurred, and new forms of persecution are emerging from the shadows of our collective imagination.