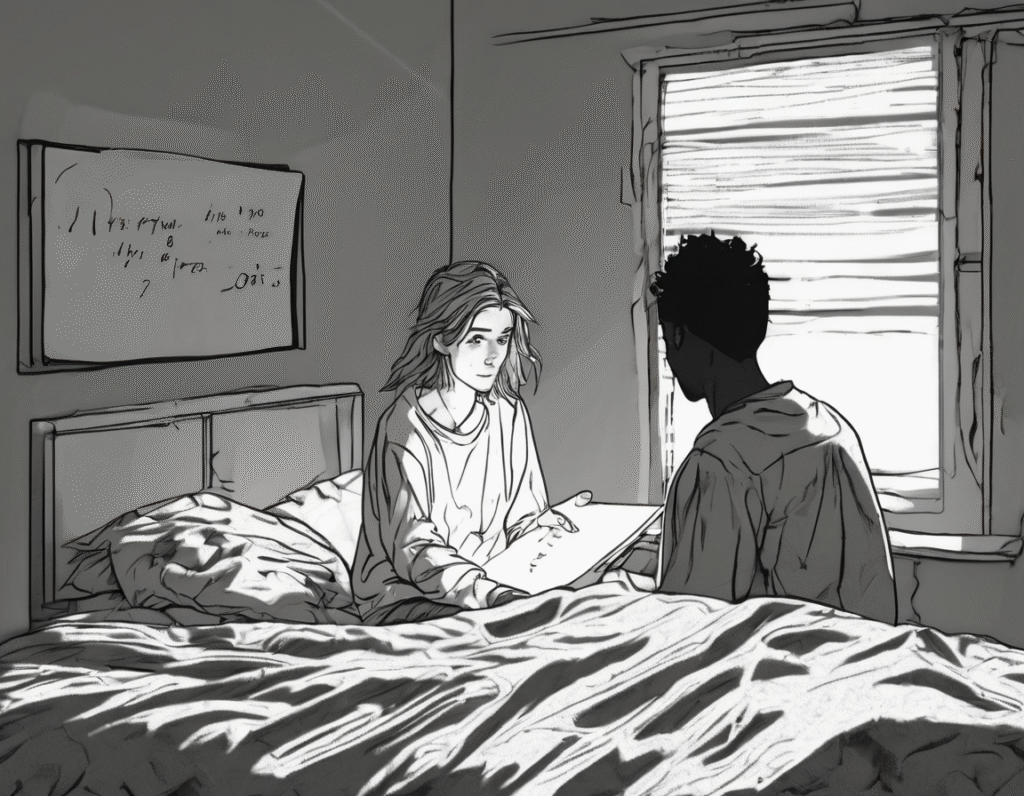

Gen Z Turns to AI Chatbots for Relationship Drama, Experts Call It a Social Skill Crisis

A new and deeply awkward trend is emerging as younger generations increasingly outsource their most delicate personal conversations to artificial intelligence. Instead of navigating tough talks with partners, friends, or family directly, many are using chatbots to draft messages, simulate arguments, and even generate break-up texts. While pitched as a tool for clarity, psychologists and sociologists warn this represents a troubling form of social offloading that may erode human connection and emotional competence.

The behavior is straightforward. A user, anxious about a confrontation, will prompt an AI like ChatGPT with details of a relationship conflict. They ask it to generate the perfect text message to express hurt, to formulate a gentle let-down, or to craft a reply to a confusing partner. The resulting messages are often lengthy, oddly formal, and packed with therapeutic jargon, creating a jarring disconnect between the sender’s authentic voice and the bot’s stilted prose. Recipients frequently report that the messages feel cold, impersonal, and confusing, sometimes escalating rather than resolving the issue.

Proponents argue that AI serves as a modern-day etiquette coach, helping individuals organize their thoughts and reduce anxiety. For those who struggle with social cues or articulating emotions, it can provide a starting template. In a digital communication landscape, the idea of a proofreader for feelings can seem logical. Some users report that running their own raw emotions through an AI filter helps them present a calmer, more coherent version of themselves.

However, critics see a far more damaging pattern. They argue this is not assistance but avoidance. The fundamental mechanics of human relationships require navigating discomfort, reading non-verbal cues, and responding in real time to another person’s emotions. By inserting an AI intermediary, individuals skip the essential, if difficult, work of building empathy and conflict resolution skills. The bot becomes a crutch, preventing the development of emotional resilience.

The deeper concern is that this trend compensates for a growing deficit in genuine social interaction skills rather than addressing it. If you never practice having hard conversations, you never learn how to have them. The awkward, cringe-inducing moments of miscommunication are actually critical learning experiences. Offloading that labor to an algorithm may produce a technically polished message, but it forfeits authenticity and the subtle mutual understanding that comes from two people working through a problem together.

Furthermore, AI-generated messages lack the nuanced history, inside jokes, and shared language of a real relationship. A partner can often tell when words are not their significant other’s own, leading to feelings of distrust and inauthenticity. The relationship becomes mediated by a third-party algorithm trained on generic data, not the unique bond between two people.

This trend mirrors a broader societal shift where technology is used to buffer against raw human experience. Just as social media can present a curated life, AI conversation scripts present a curated, risk-managed self. The problem is that real intimacy requires vulnerability and risk. True connection cannot be outsourced to a large language model.

The long-term implication is a generation that may become proficient in managing AI prompts but increasingly incompetent in managing the messy, unrehearsed, and profoundly human moments that define our closest bonds. While AI can be a tool for many tasks, delegating the core work of the heart to a machine may come at the ultimate cost of never truly learning how to connect.