A mysterious graph has taken over AI Twitter. It shows AI capabilities growing faster than expected. But experts say everyone is reading it wrong.

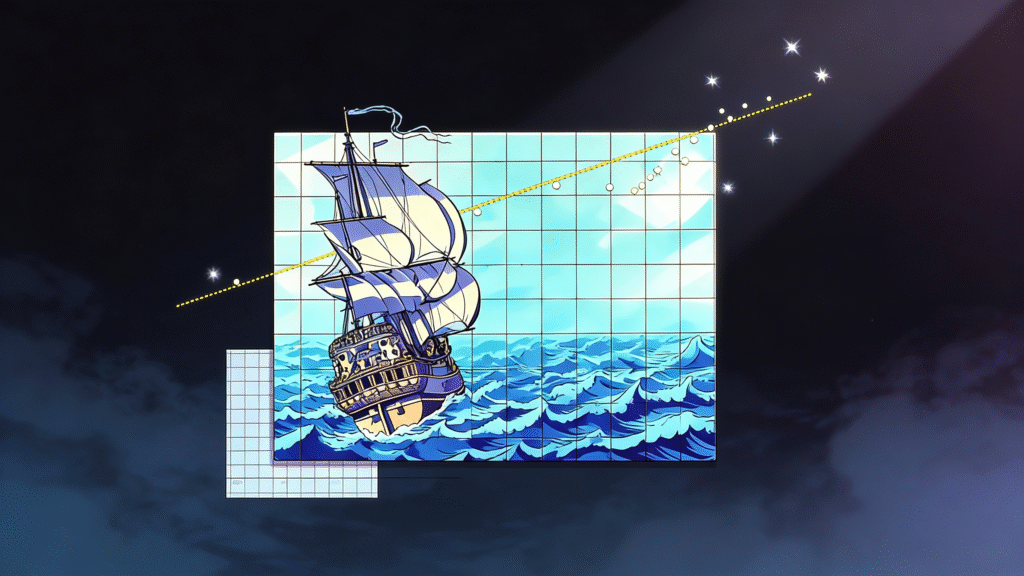

Every time OpenAI, Google, or Anthropic drops a new model, the AI community waits for METR's graph to update. The nonprofit research group estimates how long a task, measured in the time it takes a human expert, an AI model can complete about half the time. Their famous plot shows that task length growing at an exponential rate.

The latest model, Anthropic's Claude Opus 4.5, shattered expectations. METR estimated it can complete tasks that take humans about five hours, a new record. One safety researcher tweeted, ‘Mom come pick me up, I'm scared.’

But there's a catch that often gets lost in the hype.

METR's estimates have huge error bars. The organization itself warned that Claude might handle tasks taking humans anywhere from about two hours to about twenty hours. We simply don't know.

The graph doesn’t measure AI abilities overall. It focuses on coding tasks. Just because AI can code doesn’t mean it can replace workers.

METR was founded to assess risks from advanced AI. Yet one of its own studies found that AI coding assistants might actually slow down experienced software engineers.

The exponential plot made METR famous. But the organization seems uncomfortable with how their work gets interpreted.

The takeaway? Progress is real. But hype moves faster than science. Before you panic about AGI destroying jobs, remember: the graph is just one metric, and even the researchers don’t fully understand what it means.