Will Smith AI Concert Fail Exposes Deepfake Dangers and a New Era of Synthetic Reality

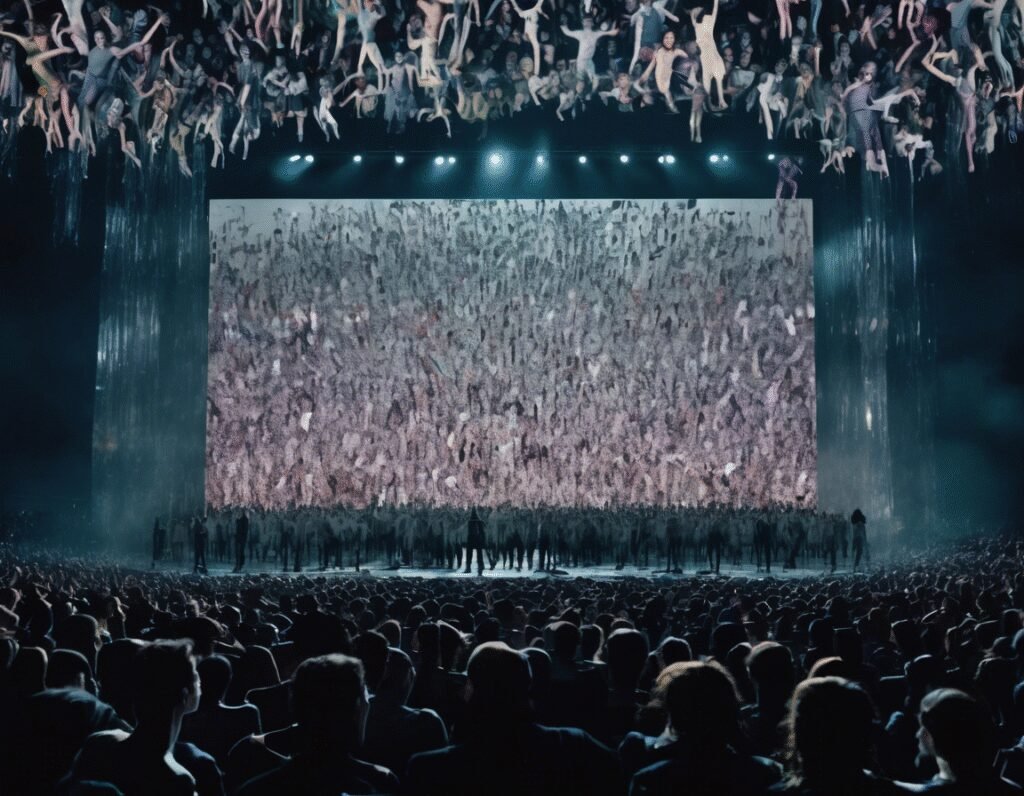

Last week, Will Smith became the latest public figure to fall victim to the uncanny valley of artificial intelligence, but this time, he was the one holding the tools. In a promotional clip for his Based on a True Story comeback tour, his team used AI to digitally generate a crowd of ecstatic, cheering fans. The intention was clear: to project an image of overwhelming success and vibrant energy for the 56-year-old rapper and actor’s return to the stage.

However, the execution was a catastrophic failure that did not go unnoticed by the internet. Instead of a seamless enhancement, the AI inserted a gallery of nightmare-fuel spectators. The digitally created fans appeared distorted and demonic, with facial features that melted into bizarre, inhuman shapes. Their signs, meant to praise Smith, were covered in garbled, nonsensical text that looked more like a cursed artifact than supportive messaging. This synthetic audience stood in stark, jarring contrast to the actual, and noticeably more subdued, crowd visible in other unedited videos from the tour.

The incident backfired spectacularly, generating exactly the kind of viral mockery it was meant to avoid. It served as a very public case study in the risks of deploying immature AI technology for reputation management. The move was seen as deeply inauthentic, a desperate attempt to manufacture hype that ultimately highlighted a perceived lack of it. This is not Smith’s first brush with AI weirdness: he was previously the subject of a viral meme that used the technology to show him slurping spaghetti.

This event transcends a simple public relations blunder. It signals a new and unsettling frontier where reality is not just captured but actively constructed. For those in the crypto and Web3 space, this is familiar territory. The community has long been discussing digital authenticity, provenance, and how to verify what is real in an increasingly virtual world. Smith’s AI debacle is a mainstream, pop culture example of the very problems that technologies like blockchain aim to solve.

The episode raises critical questions that resonate deeply within tech circles. How will we trust digital media when AI can so easily generate convincing but entirely fake scenes? What does authenticity mean when a performer can artificially inflate their own applause? This is not a distant future problem; it is happening now. The tools to create synthetic realities are becoming cheaper and more accessible by the day.

While Smith’s team provided a clumsy and easily detectable example, the underlying technology is rapidly improving. Future iterations of such AI are unlikely to produce demonic fans with jumbled signs; they could create believable replicas of cheering crowds that are very hard to distinguish from the real thing. This poses a profound threat to trust in media, the documentation of events, and the historical record.

The conversation is no longer about whether AI will disrupt our perception of reality, but how we will adapt. For a world built on digital interaction, the need for verifiable truth has never been more urgent. The solution may lie in the very principles championed by cryptography and decentralized systems: immutable ledgers, transparent verification, and proof of origin. Will Smith’s awkward concert video is more than a joke; it is a warning shot from the future, illustrating the urgent need to build a framework for trust before synthetic reality becomes indistinguishable from our own.